Output section

Linear layer + softmax layer

Purpose:

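The linear layer applies a linear transformation to the decoder output from the previous step, projecting each vector from `d_model` dimensions to `vocab_size` dimensions; in other words, its job is to change the dimension. The softmax layer then rescales the numbers along the last dimension into the range 0 to 1, so that they can be read as probabilities that sum to 1. Note that the code below uses `F.log_softmax`, which returns the natural logarithm of these probabilities, so every output value is less than or equal to 0.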
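To make the two steps concrete, here is a minimal pure-Python sketch of "linear projection, then softmax" for a single decoder vector. The toy sizes (`d_model = 3`, `vocab_size = 4`) and the weights `W`, `b` are hypothetical values chosen only for illustration; the weight layout `(out_features, in_features)` mirrors what `nn.Linear` stores.

```python
import math

def linear(x, weight, bias):
    # y_j = sum_i x_i * W[j][i] + b_j  (weight has shape (out_features, in_features), like nn.Linear)
    return [sum(xi * wji for xi, wji in zip(x, w_row)) + b for w_row, b in zip(weight, bias)]

def softmax(z):
    # Subtract the max for numerical stability; the result is unchanged mathematically
    m = max(z)
    exps = [math.exp(v - m) for v in z]
    s = sum(exps)
    return [e / s for e in exps]

# Hypothetical toy sizes: d_model = 3, vocab_size = 4
x = [0.5, -1.0, 2.0]  # one decoder output vector (length d_model)
W = [[0.1, 0.2, 0.3],
     [0.0, -0.1, 0.4],
     [0.5, 0.5, -0.2],
     [-0.3, 0.1, 0.0]]
b = [0.0, 0.1, -0.1, 0.2]

logits = linear(x, W, b)                  # length 4 == vocab_size
probs = softmax(logits)                   # each in (0, 1), summing to 1
log_probs = [math.log(p) for p in probs]  # what log_softmax would return: all <= 0

print(len(logits), probs, sum(probs))
```

The dimension changes from 3 to 4 in the linear step; softmax only rescales values and never changes the shape.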

Code

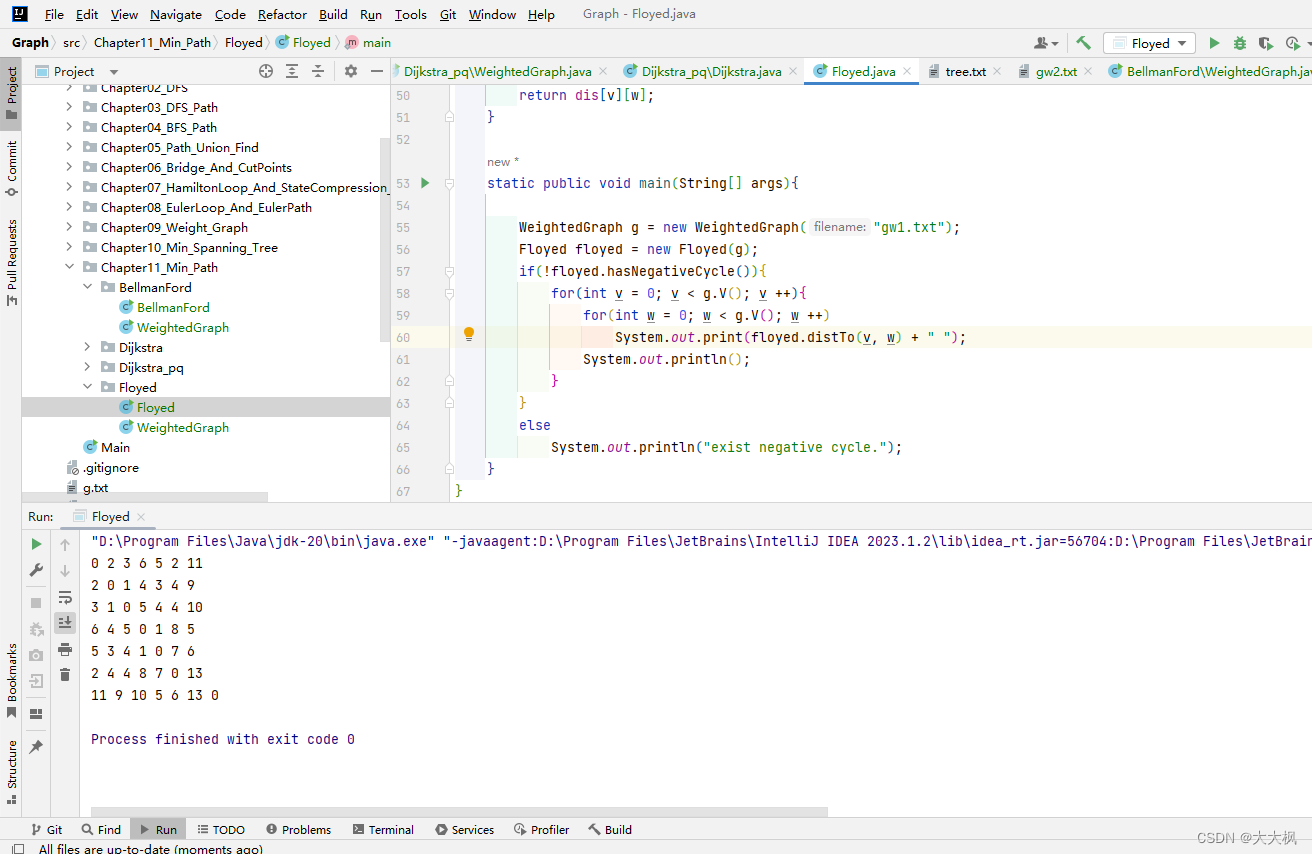

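```python
import torch.nn as nn
import torch.nn.functional as F


class Generator(nn.Module):
    def __init__(self, d_model, vocab_size):
        """Apply a linear transformation to the decoder output, followed by log-softmax.

        :param d_model: dimension of the word embeddings
        :param vocab_size: size of the vocabulary
        """
        super(Generator, self).__init__()
        # Project each vector from d_model to vocab_size dimensions
        self.project = nn.Linear(d_model, vocab_size)

    def forward(self, x):
        # x: (batch, seq_len, d_model) -> (batch, seq_len, vocab_size)
        # log_softmax over the last dimension gives log-probabilities per token
        return F.log_softmax(self.project(x), dim=-1)
```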
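Why call `log_softmax` directly instead of taking `log` of a softmax? Computing `exp` on large logits can overflow, and taking `log` of a tiny probability can underflow. The standard remedy is the log-sum-exp trick: `log_softmax(z)_i = z_i - logsumexp(z)`, where the max is subtracted before exponentiating. The following is a minimal pure-Python sketch of that idea, not PyTorch's actual implementation; the logits are hypothetical values picked to trigger overflow in the naive version.

```python
import math

def log_softmax(z):
    # log-sum-exp trick: factor out the max so exp() never sees a large argument
    m = max(z)
    lse = m + math.log(sum(math.exp(v - m) for v in z))
    return [v - lse for v in z]

logits = [1000.0, 1001.0, 1002.0]  # hypothetical large logits

try:
    math.exp(logits[0])  # naive softmax would need this
except OverflowError:
    print("naive exp overflows")

out = log_softmax(logits)
print(out)  # all values <= 0; exp(out) sums to 1
```

Only differences between logits matter, so shifting by the max loses nothing and keeps every `exp` argument at or below 0.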

Example

```python
d_model = 512
vocab_size = 1000

# de_res: the decoder output from the previous section, shape (2, 4, 512)
x = de_res

gen = Generator(d_model, vocab_size)
gen_res = gen(x)

print(f"gen_res: {gen_res}\n shape:{gen_res.shape}")
```

```
gen_res: tensor([[[-6.4770, -6.4462, -6.7124,  ..., -6.2073, -6.9740, -7.7259],
         [-6.8274, -7.6112, -6.7717,  ..., -6.8232, -6.4413, -6.1669],
         [-6.6380, -6.8908, -7.6641,  ..., -5.8640, -7.6810, -6.2209],
         [-5.7474, -7.2175, -7.1327,  ..., -6.5134, -6.8174, -6.8797]],

        [[-6.8838, -7.2975, -6.8391,  ..., -6.6967, -8.0755, -8.4198],
         [-6.9594, -7.3281, -7.3746,  ..., -6.5104, -6.5213, -7.0527],
         [-6.7861, -6.5134, -6.9520,  ..., -6.4404, -7.1817, -7.1947],
         [-6.3320, -7.0271, -7.1489,  ..., -7.7585, -6.6570, -6.9551]]],
       grad_fn=<LogSoftmaxBackward0>)
 shape:torch.Size([2, 4, 1000])
```
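The printed log-probabilities cluster around -6.9. A plausible explanation, assuming the projection layer has only just been randomly initialized (no training yet): its logits are all fairly small, so the distribution over the 1000 tokens is close to uniform, and the log-probability of a uniform choice is ln(1/1000). A quick check of that number:

```python
import math

vocab_size = 1000
# Log-probability of each token under a perfectly uniform distribution
uniform_log_prob = math.log(1 / vocab_size)
print(round(uniform_log_prob, 4))  # -6.9078
```

After training, the distribution becomes sharply peaked: a few tokens get log-probabilities near 0 and the rest drop far below this value.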

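For comparison, here is the decoder output `de_res` that was fed into the generator (shape `(2, 4, 512)`). Its values are unconstrained real numbers, whereas every value in `gen_res` is a log-probability less than or equal to 0: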

```
de_res: tensor([[[-0.7714,  0.1066,  1.8197,  ..., -0.1137,  0.2005,  0.5856],
         [-0.9215, -0.9844, -0.4962,  ..., -0.1074,  0.4848,  0.3493],
         [-2.2495,  0.0859, -0.7644,  ..., -0.0679, -0.7270, -1.3438],
         [-0.4822,  0.2821,  1.0786,  ..., -1.9442,  0.8834, -1.1757]],

        [[-0.2491, -0.6117,  0.7908,  ..., -2.1624,  0.6212,  0.6190],
         [-0.3938, -0.5203,  0.6412,  ..., -0.8679,  0.8462,  0.3037],
         [-1.0217, -1.0685, -0.5138,  ...,  1.2010,  2.0795, -0.0143],
         [-0.2919, -0.5916,  1.5231,  ..., -0.1215,  0.7127, -0.0586]]],
       grad_fn=<AddBackward0>)
```